Measurement Principles

Measurement is a fundamental practice in science, engineering, and everyday life. It involves quantifying physical quantities and properties to deepen understanding, support analysis, and inform decisions. The integrity, reliability, and consistency of measurements depend on four things: adhering to established measurement principles, understanding the physical basis of each measurement, recognising the factors that affect reliability, and systematically addressing errors and uncertainty.

Physical Principles of Measurement

Every measurement rests on the physical laws that govern the quantity being measured, and different quantities call for different measurement techniques. Most measurements draw on principles from mechanics, electromagnetism, thermodynamics, optics, or acoustics. For instance:

- Mechanics: Measurements of mass, length, time, force, velocity, displacement, and acceleration rely heavily on classical mechanics, chiefly Newton’s laws of motion.

- Electromagnetism: Measurements of electric current, voltage, electric and magnetic fields, resistance, capacitance, and inductance are grounded in the laws of electromagnetism, summarised by Maxwell’s equations.

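- Thermodynamics: Measurements of temperature, heat, pressure, and related thermal properties rest on the laws of thermodynamics. The zeroth law makes thermometry possible, since a thermometer in thermal equilibrium with a body shares its temperature; the second law defines the thermodynamic temperature scale; and practical thermometers exploit temperature-dependent properties such as thermal expansion.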

- Optics and Acoustics: Optical measurements exploit wave propagation, reflection, refraction, diffraction, polarisation, and interference, while acoustic measurements characterise sound propagation, frequency, wavelength, and intensity using the principles of wave mechanics and fluid dynamics.

These fundamental physical principles allow scientists and engineers to develop consistent and standardised measurement tools and units, ensuring repeatability, comparability, and broad applicability of measurement practices.
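As a small illustration of a physical law feeding directly into a measurement, consider a steel tape calibrated at one temperature and used at another. The sketch below applies the linear thermal-expansion relation L = L0(1 + αΔT) to correct a tape reading. The expansion coefficient (roughly 11.5 × 10⁻⁶ per kelvin, a typical order for steel), the 20 °C reference temperature, and the helper name are assumptions for illustration; the sketch ignores the expansion of the object itself and any tension or sag corrections.

```python
# Hypothetical helper: correct a length read from a steel tape that was
# calibrated at a reference temperature but used at a different one.
# Assumes linear thermal expansion: a graduation of nominal length L0
# becomes L0 * (1 + alpha * dT) at the working temperature.

STEEL_ALPHA_PER_K = 11.5e-6  # assumed linear expansion coefficient of steel (1/K)
REFERENCE_TEMP_C = 20.0      # assumed calibration temperature of the tape (°C)


def corrected_length(reading_m: float, temp_c: float,
                     alpha: float = STEEL_ALPHA_PER_K,
                     ref_temp_c: float = REFERENCE_TEMP_C) -> float:
    """Return the true length in metres for a tape reading taken at temp_c."""
    delta_t = temp_c - ref_temp_c
    # A warm tape has lengthened, so each marked metre is slightly longer than
    # a true metre and the raw reading underestimates the distance.
    return reading_m * (1.0 + alpha * delta_t)


if __name__ == "__main__":
    raw = 30.000  # metres, read on a tape at 35 °C
    print(f"corrected length: {corrected_length(raw, 35.0):.4f} m")
```

For a 30 m reading at 35 °C the correction comes to about +5 mm, already larger than the 1 mm graduations of a typical tape, which is why temperature appears among the external conditions that affect accuracy.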

Accuracy, Precision, and Calibration

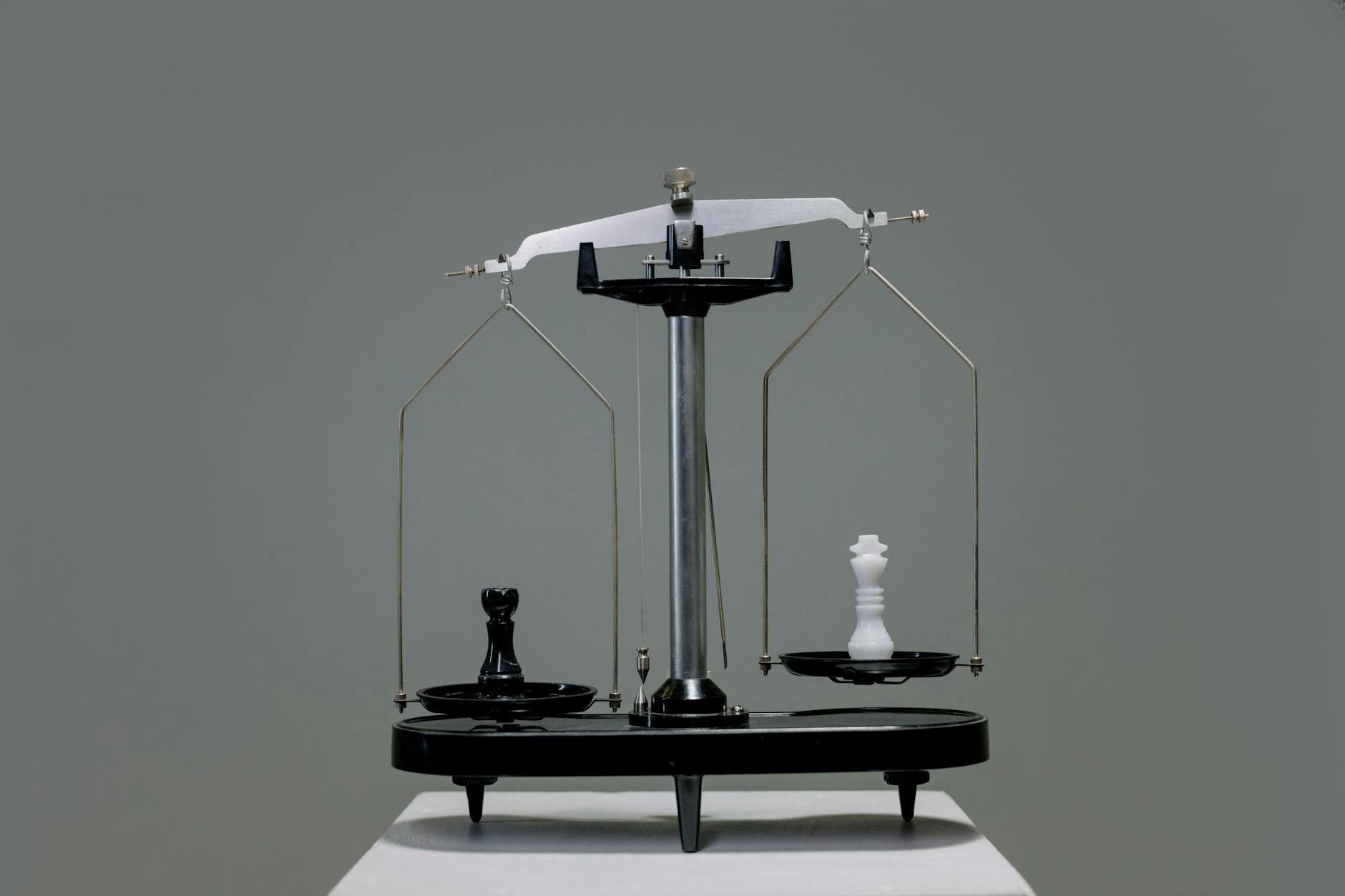

An effective measurement system must deliver results that are both consistent and correct. Three closely interconnected concepts frame this reliability: accuracy, precision, and calibration.

Accuracy

Accuracy reflects how closely a measured value approaches the true value. Highly accurate measurements deviate little from accepted reference values or standards. High accuracy requires, above all, small systematic error; strictly, a single reading is accurate only if its random error is small as well. Accuracy depends on the quality of the instruments used, the appropriateness of the measurement method, the skill of the person making the measurement, and external conditions such as temperature and humidity.

Precision

Precision describes the consistency or repeatability of measurements obtained under the same conditions. Highly precise measurements cluster closely together when repeated. Precision relates directly to random errors: more precise results show less scatter, irrespective of their accuracy. An instrument can therefore be precise yet inaccurate if it consistently deviates from the true value in the same direction, so high precision does not guarantee high accuracy.
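To make the distinction concrete, the sketch below summarises two invented sets of readings of a reference mass assumed to be exactly 100.0 g. The offset of the mean from the reference value (the bias) reflects systematic error and hence accuracy; the sample standard deviation reflects scatter and hence precision. The data, the reference value, and the function name are hypothetical.

```python
import statistics

REFERENCE_G = 100.0  # assumed true value of the reference mass (g)


def summarise(readings: list[float],
              reference: float = REFERENCE_G) -> tuple[float, float]:
    """Return (bias, sample standard deviation) for repeated readings of a known reference."""
    bias = statistics.mean(readings) - reference  # systematic offset: accuracy
    spread = statistics.stdev(readings)           # scatter: precision
    return bias, spread


# Hypothetical data: balance A is precise but biased; balance B is nearly unbiased but noisy.
balance_a = [102.1, 102.0, 102.1, 101.9, 102.0]
balance_b = [98.6, 101.6, 99.2, 100.9, 99.8]

for name, data in [("A", balance_a), ("B", balance_b)]:
    bias, spread = summarise(data)
    print(f"balance {name}: bias = {bias:+.2f} g, std dev = {spread:.2f} g")
```

Judged on repeatability alone, balance A looks excellent; only comparison with the reference value exposes its offset of about +2 g, which is exactly what calibration is meant to catch.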

Calibration

Calibration maintains the accuracy of measurement equipment by periodically comparing its readings against recognised standards of known accuracy. The comparison reveals systematic biases or drift in the instrument, which can then be removed by adjusting the instrument or by applying corrections to its readings. Regular calibration keeps results aligned with recognised standards, reduces measurement uncertainty, and increases confidence in the conclusions and decisions drawn from the data.
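One common way to turn calibration data into a correction is to fit a linear relation between instrument readings and reference values. The sketch below assumes a hypothetical thermometer checked against two reference baths of known temperature (0.0 °C and 100.0 °C are assumed) and assumes its error varies linearly between them; real calibrations often use more points and a fitted curve. The class and method names are illustrative, not from any library.

```python
from dataclasses import dataclass


@dataclass
class LinearCalibration:
    """Correction of the form true = gain * reading + offset."""
    gain: float
    offset: float

    @classmethod
    def from_two_points(cls, read_lo: float, ref_lo: float,
                        read_hi: float, ref_hi: float) -> "LinearCalibration":
        """Derive gain and offset from two (reading, reference value) pairs."""
        if read_hi == read_lo:
            raise ValueError("calibration readings must differ")
        gain = (ref_hi - ref_lo) / (read_hi - read_lo)
        offset = ref_lo - gain * read_lo
        return cls(gain, offset)

    def correct(self, reading: float) -> float:
        """Map a raw instrument reading onto the reference scale."""
        return self.gain * reading + self.offset


# Hypothetical calibration data: the thermometer reads 0.8 in the 0.0 °C bath
# and 101.5 in the 100.0 °C bath.
cal = LinearCalibration.from_two_points(read_lo=0.8, ref_lo=0.0,
                                        read_hi=101.5, ref_hi=100.0)
print(f"gain = {cal.gain:.5f}, offset = {cal.offset:+.4f} °C")
print(f"raw 37.0 -> corrected {cal.correct(37.0):.2f} °C")
```

Once the gain and offset are known, every later reading can be corrected in software. Repeating the calibration after some interval and comparing the new coefficients with the old ones is one simple way to detect drift.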

Types of Errors and Uncertainty in Measurements

Every measurement contains some error. Errors are broadly divided into systematic and random errors, with gross errors (blunders) as a third category, and together they leave every result with some degree of uncertainty.

Types of Errors

- Systematic Errors: These are consistent, reproducible errors arising mainly from faults in the measuring equipment, incorrect calibration, or persistent environmental conditions. Because they follow predictable patterns, they can be at least partly corrected through proper calibration, method adjustment, or equipment maintenance. Examples include instrument zero errors, calibration drift, environmental interference, and consistent observational biases.

- Random Errors: Unlike systematic errors, random errors occur unpredictably and cause readings to vary. Sources include differences in how an operator reads the instrument, minor instrument instabilities, and external fluctuations or environmental noise. These errors show up as scatter around a mean value and are usually analysed statistically. Their effect can be reduced by averaging repeated measurements (for independent readings, the standard deviation of the mean falls as 1/√n), improving instrument design, and controlling the experimental environment.

- Gross Errors (Blunders): Gross errors usually result from human mistakes or faulty operation of equipment, such as misreading a scale or recording a value incorrectly. Because they typically produce values far from the other measurements, they can usually be identified afterwards through careful review and validation; the affected readings should then be discarded or repeated.
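The three error types call for different treatment when data are processed. The sketch below uses invented readings of a 50.00 mm gauge block taken with a micrometer assumed to have a +0.02 mm zero error, plus one misread digit. Subtracting the known zero error removes the systematic part, averaging reduces the effect of random scatter, and a screening rule flags the blunder. The median/MAD screening rule, its threshold k = 5, and the resolution floor are illustrative choices, not a prescribed standard.

```python
import math
import statistics

ZERO_ERROR_MM = 0.02  # assumed systematic offset, read with the micrometer jaws closed
RESOLUTION_MM = 0.01  # assumed instrument resolution


def screen_blunders(values: list[float], k: float = 5.0,
                    floor: float = RESOLUTION_MM) -> tuple[list[float], list[float]]:
    """Split values into (kept, rejected) using a median / median-absolute-deviation rule.

    A value is rejected if it lies more than k robust-scale units from the median.
    The scale never drops below the instrument resolution, so a run of identical
    readings does not make the rule infinitely strict.
    """
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    limit = k * max(mad, floor)
    kept = [v for v in values if abs(v - med) <= limit]
    rejected = [v for v in values if abs(v - med) > limit]
    return kept, rejected


# Hypothetical readings (mm); 55.02 is a misread digit, i.e. a gross error.
readings = [50.03, 50.01, 50.02, 50.03, 50.01, 50.02, 55.02, 50.02]

kept, rejected = screen_blunders(readings)                  # gross error: detect and discard
mean = statistics.mean(kept) - ZERO_ERROR_MM                # systematic error: apply known correction
std_error = statistics.stdev(kept) / math.sqrt(len(kept))   # random error of the mean, ~1/sqrt(n)

print(f"rejected: {rejected}")
print(f"corrected mean = {mean:.3f} mm, standard error = {std_error:.3f} mm")
```

The order matters: the blunder is removed before averaging, because left in it would shift the mean by about 0.6 mm, far more than either the zero error or the random scatter.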

Uncertainty in Measurement

Measurement uncertainty quantifies the doubt about a measurement result: it characterises the spread of values that could reasonably be attributed to the quantity being measured. Uncertainty arises from random effects and from imperfect correction of systematic effects. Because its magnitude affects how measured values are interpreted and what decisions they can support, quantifying it accurately is crucial. Uncertainty components are classified by how they are evaluated:

- Type A Uncertainty: Evaluated by statistical analysis of repeated measurements (random variability) and expressed as a standard deviation, a standard deviation of the mean (standard error), or a confidence interval.

- Type B Uncertainty: Evaluated by informed judgement from other information, such as experience, calibration certificates, previous data, manufacturer specifications, or reference literature. It is used where statistical treatment of repeated measurements isn’t possible or practical, and often covers components arising from calibration, equipment specifications, and published tolerances. The A/B distinction describes how a component is evaluated, not whether the underlying effect is random or systematic.
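A minimal sketch of the two evaluation routes, using invented numbers. The Type A component is the experimental standard deviation of the mean of repeated readings, s/√n. The Type B component converts an assumed manufacturer specification of ±a into a standard uncertainty by assuming a rectangular (uniform) distribution, which gives u = a/√3. The rectangular assumption is one common choice when only bounds are known; other assumed distributions lead to different divisors. Function names are hypothetical.

```python
import math
import statistics


def type_a_uncertainty(readings: list[float]) -> float:
    """Type A: experimental standard deviation of the mean, s / sqrt(n)."""
    if len(readings) < 2:
        raise ValueError("need at least two readings")
    return statistics.stdev(readings) / math.sqrt(len(readings))


def type_b_rectangular(half_width: float) -> float:
    """Type B: standard uncertainty of a quantity known only to lie within
    +/- half_width, assuming all values in that interval are equally likely."""
    return half_width / math.sqrt(3)


# Hypothetical voltmeter readings (V) and an assumed specification of +/- 0.005 V.
volts = [1.0012, 1.0008, 1.0015, 1.0010, 1.0011, 1.0009]
u_a = type_a_uncertainty(volts)
u_b = type_b_rectangular(0.005)
print(f"Type A: {u_a:.5f} V, Type B: {u_b:.5f} V")
```

In this invented case the specification-based Type B component is more than an order of magnitude larger than the scatter-based Type A component, so taking more readings would barely improve the overall uncertainty.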

The internationally recognised framework for evaluating and expressing measurement uncertainty is the Guide to the Expression of Uncertainty in Measurement (GUM), published by the Joint Committee for Guides in Metrology (JCGM), whose member organisations include the BIPM and ISO.
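For uncorrelated input quantities, the GUM combines standard uncertainty components in quadrature (root-sum-of-squares), each weighted by a sensitivity coefficient c_i = ∂f/∂x_i, giving the combined standard uncertainty u_c; it then reports an expanded uncertainty U = k·u_c. The sketch below assumes uncorrelated components, uses an invented uncertainty budget with all sensitivity coefficients equal to 1, and uses the coverage factor k = 2, which corresponds to roughly 95 % coverage when the result is approximately normally distributed.

```python
import math
from typing import Iterable


def combined_standard_uncertainty(components: Iterable[tuple[float, float]]) -> float:
    """Root-sum-of-squares of (sensitivity coefficient, standard uncertainty) pairs.

    Valid only when the input quantities are uncorrelated.
    """
    return math.sqrt(sum((c * u) ** 2 for c, u in components))


def expanded_uncertainty(u_c: float, k: float = 2.0) -> float:
    """Expanded uncertainty U = k * u_c; k = 2 gives roughly 95 % coverage for a normal result."""
    return k * u_c


# Hypothetical budget for a voltage measurement: (sensitivity coefficient, standard uncertainty in V).
budget = {
    "repeatability (Type A)": (1.0, 0.00010),
    "meter specification (Type B)": (1.0, 0.00289),
    "calibration certificate (Type B)": (1.0, 0.00050),
}
u_c = combined_standard_uncertainty(budget.values())
U = expanded_uncertainty(u_c)
print(f"u_c = {u_c:.5f} V, U (k = 2) = {U:.4f} V")
```

A result is then reported as the measured value ± U together with the coverage factor, so that readers can recover u_c = U/k.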

Conclusion

Reliable measurement depends on understanding fundamental measurement principles. Measuring physical quantities well requires understanding and applying the relevant physical laws, together with close attention to accuracy, precision, and regular calibration. Systematically identifying and correcting errors, and quantifying the remaining uncertainty, further improves reliability.

Applying these fundamentals turns measured data into meaningful, credible, and actionable information. Careful attention to measurement principles improves quality control, strengthens research credibility, supports sound engineering design, and enables effective data-driven decision-making across many fields.